Traditional enterprise workflow automation has been succeeding at scale for decades now. Trillion-dollar investment firms merged global siloed teams into a single, hyper-efficient shared services team. Compliance offices at energy giants replaced months-long backlogs of permits and approvals with real-time, airtight systems reporting on thousands of metrics across multiple countries.

But now, the industry has reached an inflection point that changes the conversation entirely.

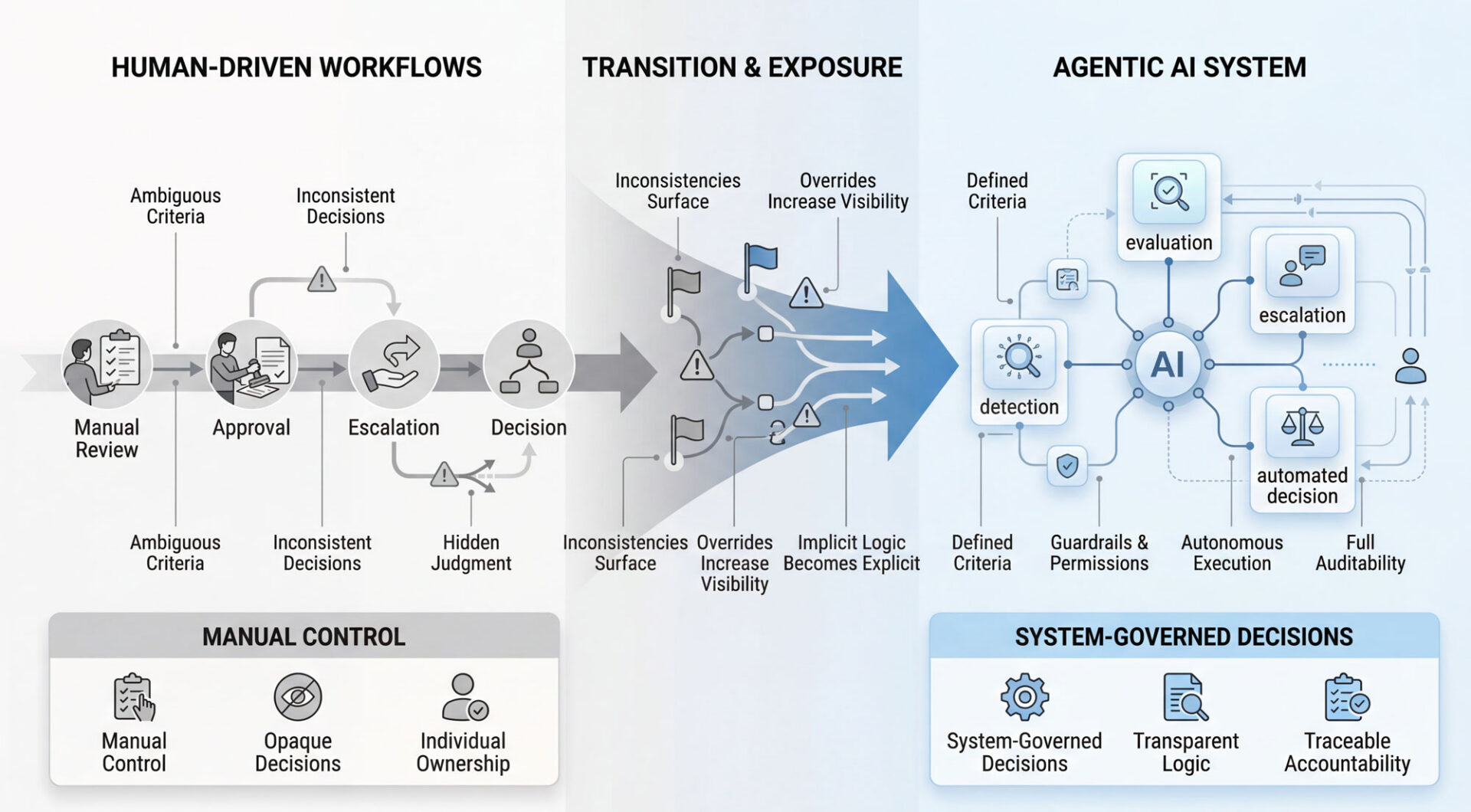

Agentic AI represents something fundamentally different from traditional automation. It’s not enough anymore for automations to just follow rules. Autonomous AI agents now work together to evaluate data, detect blockers, classify issues, and recommend actions without waiting for human approval.

And it’s no longer just a future possibility; the evidence is operational.

But here’s the critical gap: when it comes to workflow, most organizations aren’t ready to let the system think for itself.

The Shift You Should Be Thinking About

…because your competitors are.

Traditional automation follows instructions. If X happens, do Y. Simple and predictable.

Agentic AI breaks that model.

Consider a recurring task health evaluation. A job task routes to an AI agent on a defined cadence (daily, weekly, etc.). The agent reviews parts availability, progress completed, time logged, and due dates. It detects potential blockers. If it finds a problem, it classifies the issue, recommends escalation, and assesses the impact on the due date – no human involved.
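As a rough illustration, the evaluation described above might look like the sketch below. It is not HighGear's implementation: the `Task` fields, the blocker labels, and the 8-hours-per-day projection are all assumptions made up for the example.

```python
import math
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Task:
    # Hypothetical fields; a real task record will differ.
    name: str
    due: date
    percent_complete: float  # 0.0 to 1.0
    hours_logged: float
    hours_estimated: float
    parts_available: bool


def evaluate_task_health(task: Task, today: date, hours_per_day: float = 8.0) -> dict:
    """Review one task, classify any blockers, and recommend an action."""
    blockers = []
    if not task.parts_available:
        blockers.append("parts_unavailable")
    if task.hours_logged > task.hours_estimated:
        blockers.append("over_time_budget")

    # Naive projection (an assumption): the remaining work is the unfinished
    # share of the estimate, done at a fixed number of hours per calendar day.
    remaining_hours = task.hours_estimated * (1.0 - task.percent_complete)
    projected_finish = today + timedelta(days=math.ceil(remaining_hours / hours_per_day))
    slip_days = max(0, (projected_finish - task.due).days)
    if slip_days > 0:
        blockers.append("due_date_at_risk")

    return {
        "task": task.name,
        "blockers": blockers,
        "slip_days": slip_days,
        "recommendation": "escalate" if blockers else "none",
    }


# Example run on the agent's cadence (daily, weekly, etc.).
pump = Task("Replace pump", date(2025, 3, 10), 0.25, 10.0, 40.0, parts_available=False)
report = evaluate_task_health(pump, today=date(2025, 3, 7))
```

The function only recommends; what happens to an "escalate" recommendation is decided by the boundaries and permissions the organization sets around the agent.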

The agent operates within explicit boundaries and permissions. But within those boundaries, it’s autonomous.

It’s important to point out here that one of the key risks of agentic systems is failure to establish these boundaries in the first place. With a built-in suite of security considerations including explicit data boundaries, permission handling, and system-level controls, HighGear has mitigated these risks while allowing users to leverage the power of agentic AI in their workflows. More on this here.

By the end of 2026, roughly 40% of business workflows won’t be managed by humans clicking buttons, but by agentic AI systems that can plan, execute, and course-correct in real-time.

The magnitude of this shift is hard to overstate.

88% of senior executives have already approved bigger AI budgets specifically to move from automation to autonomy. The capital commitment signals this isn’t experimental anymore; it is operational reality.

The Decision Logic Problem

Often, great theories bog down when they meet real operational friction, and this case is no different.

Most organizations can barely agree on approval thresholds for expense reports. How do you configure an AI agent’s decision-making criteria when the humans themselves haven’t clearly defined what constitutes a “blocker” or when escalation should happen?

Agentic AI doesn’t replace decision-making maturity. It exposes and accelerates it.

An agentic workflow step isn’t deciding what should be approved in a philosophical sense. It’s executing against defined criteria, patterns, and constraints provided by the organization.

If those criteria are vague or inconsistent, the AI doesn’t magically resolve that ambiguity. It makes it visible.

As the agent evaluates each task, it applies the defined rules against real inputs like data, timing, thresholds, dependencies, etc. When it encounters scenarios that technically meet the criteria but produce unexpected or conflicting outcomes, those decisions are surfaced through logs, flags, and escalation patterns.

Soon, patterns emerge. Similar cases are handled differently. Certain edge cases trigger repeated overrides. Decisions that appear valid on paper consistently raise concern in practice.

What was previously buried in human judgment becomes observable in system behavior. Implicit decision logic becomes explicit. Not because the AI interprets ambiguity, but because it exposes where the rules fail to account for reality.

In doing so, the system creates a repeatable framework that can now be measured and refined over time.

This is where many organizations feel friction for the first time.

The goal shouldn’t be to define perfect rules upfront, but to establish guardrails.

A practical approach starts with clear hard stops: compliance violations, spending above X threshold. Then defined safe approvals: routine, low-risk transactions. Then escalation triggers for anything in between.

The AI operates confidently in the center, where consensus already exists. It defers to humans at the edges, creating the exact feedback loop needed to refine the model over time.
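The three tiers can be sketched as a small triage function. The thresholds, category names, and the `Request` shape below are hypothetical placeholders; each organization would supply its own.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    BLOCK = "block"        # hard stop
    APPROVE = "approve"    # defined safe approval
    ESCALATE = "escalate"  # defer to a human


@dataclass
class Request:
    amount: float
    category: str
    compliance_flags: tuple = ()


# Hypothetical guardrail values, for illustration only.
HARD_STOP_AMOUNT = 10_000.0
SAFE_AMOUNT = 500.0
SAFE_CATEGORIES = {"office_supplies", "software_renewal"}


def triage(req: Request) -> Outcome:
    # 1. Hard stops: compliance violations or spending above the threshold.
    if req.compliance_flags or req.amount > HARD_STOP_AMOUNT:
        return Outcome.BLOCK
    # 2. Safe approvals: routine, low-risk transactions.
    if req.amount <= SAFE_AMOUNT and req.category in SAFE_CATEGORIES:
        return Outcome.APPROVE
    # 3. Everything in between goes to a person.
    return Outcome.ESCALATE
```

Checking hard stops first means a small purchase with a compliance flag is still blocked, and the default branch is escalation, so anything the rules did not anticipate lands with a human rather than being silently approved.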

The Moment of Exposure

It rarely arrives as a sudden realization.

It’s more like coming home one day and realizing the house is a disaster: you left one thing here and one thing there, never noticing how it was all piling up, and now it needs some serious TLC.

Most organizations start with a controlled use case like expense approvals, vendor onboarding and credentialing, or change requests. They configure an AI agent to follow the process they think they have.

Reality then enters the room and the red flags show up.

Transactions that meet the criteria but just feel wrong to reviewers. Approvals that differ depending on who previously handled them. Edge cases that no one accounted for but that happen more frequently than anyone thought.

Agents make mistakes, so any system must give humans the tools to review, modify, or override the AI’s behavior.

Every exception must be visible and trackable, not an invisible judgment call.

When everything is visible, people can have conversations about “why did this happen,” and can clarify and improve the process. Ownership shifts safely from individuals to the system. And the narrative can change from “the AI got this wrong” to “our rule didn’t account for this scenario.”

When the goal shifts from perfection to visibility, the system quickly reveals where logic is inconsistent or insufficiently documented. What feels like friction at first quickly takes shape into alignment, efficiency, and operational scalability.

The Identity Problem

People defend their manual approval authority like it’s their identity.

Someone who thinks their job is “approving expenses” will feel threatened. Someone who understands they’re responsible for making good financial decisions that align with company policy sees the opportunity differently.

This is a conversation about task completion versus operational ownership and accelerated outcomes.

Decision makers are often overloaded with low-value decisions like routine approvals and repetitive checks. Reframing AI as a filter rather than a replacement preserves their status and improves leverage. They’re freed up to maximize their value because now they’re governing the important decisions at scale.

The shift in perspective may initially make their current role feel smaller, but their actual value will be bigger.

Their value isn’t in touching every decision. It’s in ensuring every decision is made correctly, while gaining the capacity to make critical decisions more effectively than their previous workload allowed.

When configured correctly, agentic systems don’t remove authority. Oversight is explicit and visible. Process owners can see what the AI is doing. They can override it. They can fully configure and adjust it.

But agentic systems do remove the need for them to exercise that responsibility over each and every low-value transaction.

The Pressure of Visibility

So now the system is in place. Suddenly, every exception and override is visible and trackable.

Decision-makers have to justify why they went against what the system recommended. That justification lives in a record somewhere.

The pressure isn’t new. It’s newly visible.

If handled well, overrides become signals. Justifications become inputs. The organization (and therefore the system) learns from them, giving even more voice to the decision makers.

A well-designed system can protect the decision-maker as much as it may expose them. When decisions are tracked, it’s easy to show why a call was made, and what context the system didn’t have. That’s a stronger position to be in when auditors eventually question a decision.

The real risk is cultural, not technical.

If leadership treats overrides as errors to eliminate or aggressively question, people will feel like they’re under a microscope. But if they are seen as feedback loops and opportunities to refine policy, the scenario changes entirely.

The difference comes down to whether the organization is trying to blindly enforce its idea of compliance or to continuously improve the quality of the decisions being made for the long term.

When It Goes Wrong

Inevitably, organizations will get this wrong.

The starting assumption seems reasonable enough: “If the AI is configured correctly, overrides should be rare.”

So metrics appear, such as override frequency by user and the teams with the most deviations, and subtly (or not so subtly) judgment is attached to them.

Behavior then quietly shifts away from better decisions and toward safer optics.

Users get led into a mindset of “I could override this but I don’t want to explain it.” Observers may think that overrides decreased because the system is more accurate. But they actually decreased because users are afraid to challenge the system.

You end up with a system that looks compliant on paper but is actually making worse decisions because people are afraid to use their judgment.

Many so-called agentic initiatives are actually automation use cases in disguise. Enterprises often apply agents where simpler tools would suffice, resulting in poor ROI. This “agent washing” compounds the problem.

The Detection Problem

How does an organization detect this before something breaks in a consequential way?

If it seems too good to be true, it usually is. Rarely is a system perfect.

Some level of friction must be expected; it is a good sign that a process is working as intended. This is why agility is already becoming the differentiator between successful and unsuccessful applications of agentic AI.

A healthy system has a pulse. A flatline is suspicious.

A competent organization needs to compare historical trends, behavior, and data against those of the new system to highlight potential red flags:

- Different departments with historically different patterns suddenly behaving identically

- Your most valued decision-makers suddenly providing less feedback than your rookies

- Override rates that drop to near zero within weeks of deployment

- Escalation patterns that become perfectly uniform across teams

Complete consistency might sound like a win, but ultimately expertise should create informed variation, not eliminate it entirely.
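Two of the red flags above, override rates collapsing to near zero and departments suddenly behaving identically, lend themselves to a simple check. The sketch below assumes override rates per department are already available as fractions of decisions overridden; the function name and both thresholds are illustrative, not recommended values.

```python
from statistics import pstdev


def red_flags(baseline: dict, current: dict,
              near_zero: float = 0.01, uniform_ratio: float = 0.25) -> list:
    """Compare per-department override rates before and after deployment.

    baseline / current map department name -> override rate (0.0 to 1.0).
    """
    flags = []

    # Flag departments whose override rate fell from a real level to near zero.
    for dept, rate in current.items():
        if baseline.get(dept, 0.0) > near_zero and rate <= near_zero:
            flags.append(f"{dept}: override rate dropped to near zero")

    # Flag a collapse in variation: departments that used to differ
    # now show almost the same rate.
    base_spread = pstdev(list(baseline.values()))
    cur_spread = pstdev(list(current.values()))
    if base_spread > 0 and cur_spread < uniform_ratio * base_spread:
        flags.append("departments now behave almost identically")

    return flags


baseline = {"finance": 0.12, "ops": 0.04, "legal": 0.20}
flatline = {"finance": 0.0, "ops": 0.005, "legal": 0.004}
healthy = {"finance": 0.10, "ops": 0.05, "legal": 0.18}
```

A flag here is a prompt to go and ask people what changed, not proof that something is wrong; the point is to notice the flatline early.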

The Governance Gap

Enterprises are moving quickly toward agentic AI, but many are hitting a wall.

Research from IBM and Morning Consult found that 99% of enterprise AI developers are

They’re trying to automate existing processes designed by and for human workers without reimagining how the work should actually be done, and they’re failing to build clear pathways and controls to support the escalation, handling, and analysis of operational exceptions. Leading organizations are discovering that true value comes from redesigning operations, not just layering agents onto old workflows.

Take invoice approvals, for example. In a traditional workflow, invoices are routed step-by-step to specific individuals for review and sign-off. Many organizations attempt to insert AI into this process by having it “assist” each approval step. But leading teams take a different approach: they allow the agent to evaluate the invoice holistically, apply policy rules, flag anomalies, and only escalate when something falls outside defined thresholds. The process isn’t just faster. It’s fundamentally different.

Traditional IT governance models don’t account for AI systems that make independent decisions and take actions. The fundamental challenge extends beyond technical control to questions about process redesign.

If an AI agent makes a bad call, who is responsible?

It can’t be the system. The system doesn’t own the decision. It just executes it.

Responsibility still sits with those who designed the process, defined the criteria, and set the boundaries the agent operates within. Is this IT’s domain, or should the business be responsible for these decisions and outcomes?

This is where many organizations fail to understand the shift. As systems become more autonomous, accountability doesn’t disappear; it becomes more structured.

Because these systems act independently, the industry is scrambling to build verifiable audit trails for every action an agent takes. If you can’t track it, you can’t trust it. But tracking what happened is only part of the equation.

Accountability requires understanding why it happened and who defined the logic behind it.

Transparency is the new currency in workflow automation.

A well-designed system provides users with access to a detailed log of agent actions, including the data evaluated, the rules applied, external tools used, and any supporting agents involved in reaching a decision.

But it doesn’t stop there.

It also creates a clear chain of ownership: who configured the rules, who approved the thresholds, who modified the logic over time.
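One way to picture such a record is a log entry that carries both what the agent did and who owns the rules it applied. The structure below is a hypothetical sketch, not HighGear's audit format; every field name is an assumption.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class RuleVersion:
    rule_id: str
    version: int
    configured_by: str  # who wrote or last modified the rule
    approved_by: str    # who signed off on its thresholds


@dataclass(frozen=True)
class AgentActionRecord:
    task_id: str
    decision: str
    data_evaluated: dict
    rules_applied: tuple          # tuple of RuleVersion
    tools_used: tuple = ()
    supporting_agents: tuple = ()
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def owners_of(record: AgentActionRecord) -> set:
    """Trace a decision back to the people behind the rules it used."""
    return {
        person
        for rule in record.rules_applied
        for person in (rule.configured_by, rule.approved_by)
    }


record = AgentActionRecord(
    task_id="INV-1042",
    decision="escalate",
    data_evaluated={"amount": 18250.0, "vendor": "Acme Supply"},
    rules_applied=(
        RuleVersion("invoice-threshold", 3, "a.lee", "m.ortiz"),
        RuleVersion("vendor-anomaly", 1, "j.chen", "m.ortiz"),
    ),
    tools_used=("erp_lookup",),
)
log_line = json.dumps(asdict(record))  # one serializable line per agent action
```

Recording the rule version alongside the decision is what lets a reviewer answer both questions at once: what happened, and whose logic produced it.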

Because when a decision is questioned, the goal isn’t to assign blame to the system. It’s to trace the outcome back to the human decisions that shaped it.

Trust is built not just in what the system does, but in how it was designed to do it.

What HighGear Gets Right

HighGear’s Agentic AI Workflow Node addresses several of these challenges directly.

It can be configured precisely to an organization’s needs. It has the intelligence to make decisions independently based on those criteria. But most importantly, it has the explicit boundaries and permissions that maintain enterprise governance and compliance.

HighGear’s agentic AI capabilities don’t assume organizations have perfect decision frameworks. They help organizations uncover, structure, and continuously refine them.

And, with the robust capabilities of HighGear’s configurable workflow and audit trail, it’s easy to ensure that, no matter how intricately agentic AI tools are deployed into a system, ultimate control remains in the hands of real users.

The goal isn’t to replace human judgment. It’s to scale it where it’s already clear and elevate it where it’s not.

All teams face manual bottlenecks that slow down how work moves through the organization: a specific person who must sign off on a task before it can move forward, team members waiting to be assigned work, and so on. The Agentic AI Workflow Node can relieve these bottlenecks because it combines configurability, the intelligence to make decisions independently, and the governance to do so safely.

The Path Forward

Organizations implementing agentic AI need to approach this as an evolution, not a revolution.

Start small. Choose a use case where the rules are relatively clear and the stakes are manageable. Configure the agent with explicit boundaries. Monitor overrides closely, not with the intention to eliminate them, but to learn from them.

Leaders – build a culture where overrides are signals, not failures.

Track not just what the AI decides, but why humans choose to intervene. Use that data to refine the system continuously.
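A minimal sketch of that feedback loop, assuming each override is stored with the rule it contradicted and the reviewer's stated reason. The `Override` fields and the `min_count` cutoff are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Override:
    rule_id: str
    agent_decision: str
    human_decision: str
    reason: str  # the reviewer's justification, kept as input for refinement


def rules_needing_review(overrides: list, min_count: int = 3) -> list:
    """Return (rule_id, override_count) pairs for rules overridden often."""
    counts = Counter(o.rule_id for o in overrides)
    return [(rule, n) for rule, n in counts.most_common() if n >= min_count]


overrides = [
    Override("expense-limit", "escalate", "approve", "pre-approved conference travel"),
    Override("expense-limit", "escalate", "approve", "pre-approved conference travel"),
    Override("vendor-check", "approve", "escalate", "vendor changed bank details"),
    Override("expense-limit", "escalate", "approve", "annual contract renewal"),
]
to_review = rules_needing_review(overrides)
```

The grouping is deliberately by rule rather than by person. Counting overrides per user is the kind of measurement that pushes people toward safer optics; counting them per rule points the conversation at the policy.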

Remember that giving workflows more autonomy requires more sophisticated governance, not less.

The very characteristics that make agentic AI powerful – autonomy, adaptability, and intelligence – also make agents more difficult to govern. One of the primary governance challenges is their ability to make decisions independently. This lack of human control makes it harder to ensure that AI agents act in a safe, fair, and ethical way.

Organizations report 30-50% process time reductions and improved accuracy when governance frameworks support autonomous execution. That’s not insignificant.

To implement these frameworks successfully, match the architecture to the business case. Give the system the smallest amount of freedom that still delivers the outcome.

The Real Question

The technology works, the evidence is clear, so what next?

Technology is rarely the constraint in enterprise transformation. The constraints are things like culture, trust, and the willingness to honestly evaluate processes and logic.

The most important takeaway from this paper is that agentic AI doesn’t solve those problems – it exposes them.

The organizations that succeed with autonomous workflow agents won’t be the ones with the best AI. They’ll be the ones with the clearest thinking about what decisions actually matter, who should make them, and how to build systems that amplify human judgment rather than replace it.

Workflows are about to start making their own decisions.

The real question isn’t whether you’re ready for it.

It’s whether you’re ready for what it reveals about how decisions actually get made in your organization.